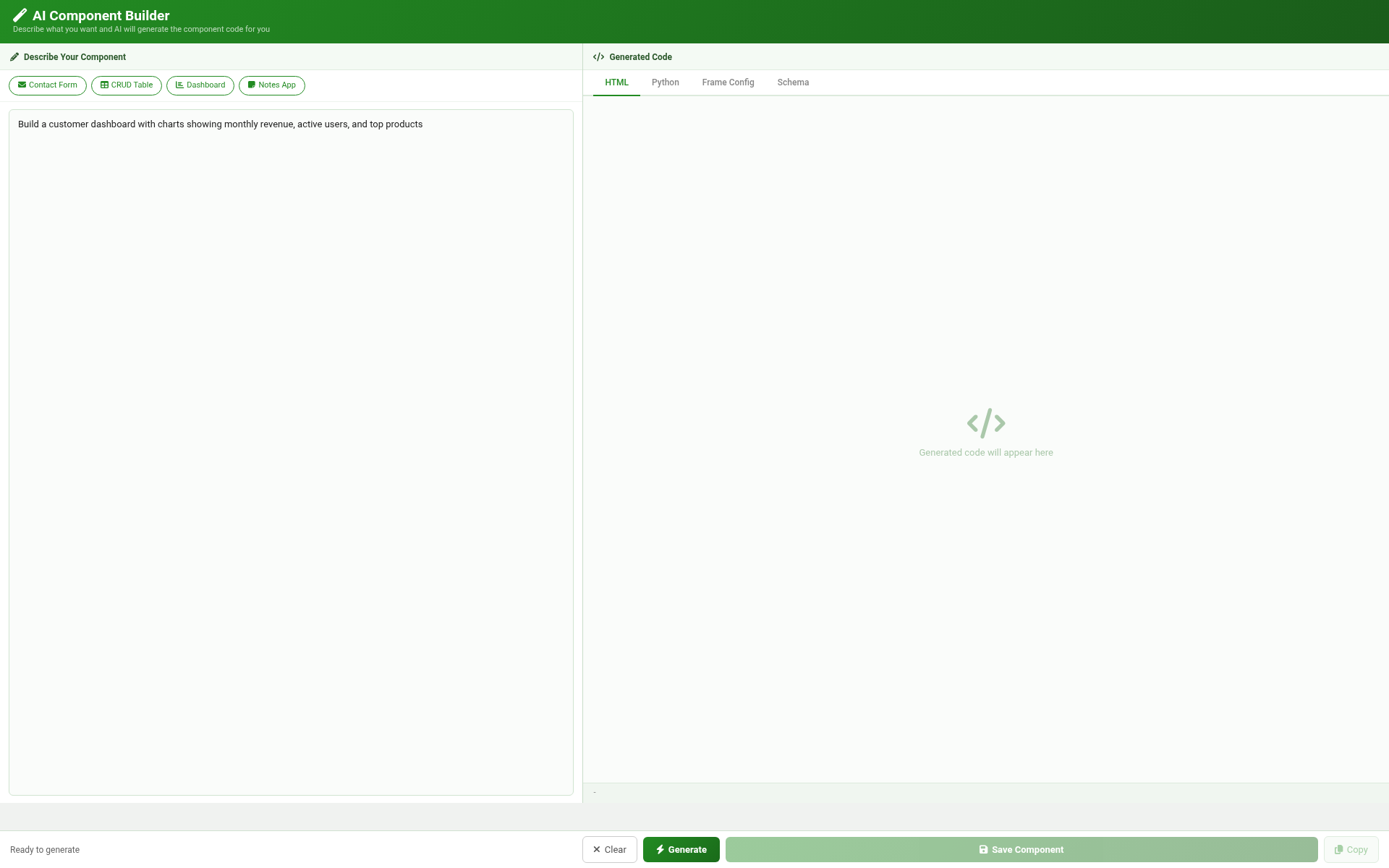

Natural-language prompt → three records.

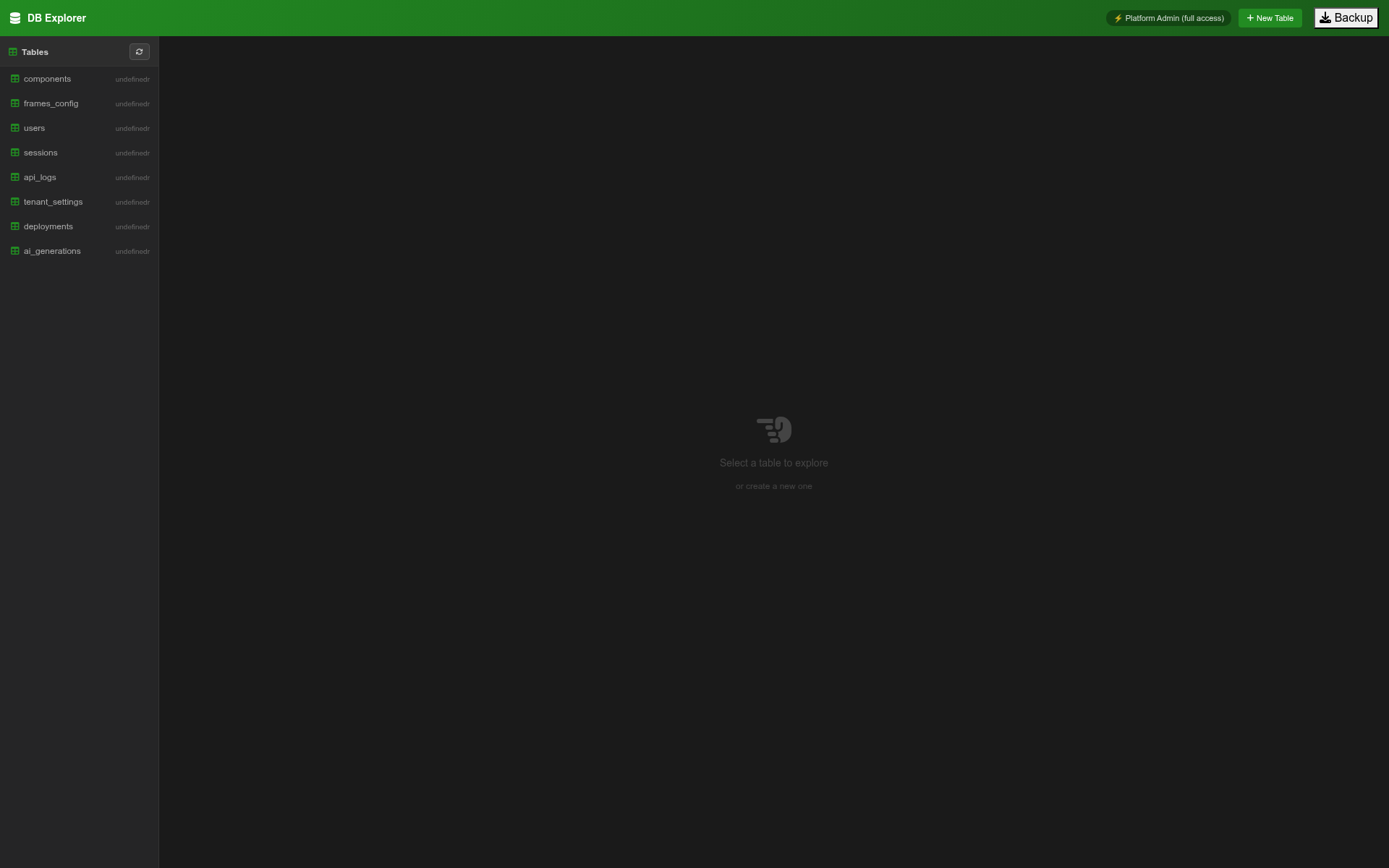

A natural-language description of the desired application is submitted to the AI Component Builder. The platform parses it into the three artifacts every component requires: a frame configuration, a front-end record, and a back-end record.

On a traditional stack, this stage produces text in a chat window. The text has to be manually copied into files, files saved to a repo, repo committed, branch pushed. Every step is an opportunity for the agent to drift out of sync with the project’s state.